3. The quality of your data is of utmost importance

Bad data can be worse than NO data.

Your web data has to be trustworthy at scale, so a comprehensive quality assurance (QA) process is one of the defining success factors. The best web scraping tech stack in the world is useless if the integrity of its data can’t be trusted.

At scale, you need a comprehensive plan that incorporates fully automated data quality checks and monitoring. Incorrect data can have far-reaching impacts on the upstream and downstream systems that rely on it.

QA happens at several parts of the data stack:

testing and monitoring of the spiders and tech to ensure technical operation is maintained and data flows are as expected, and

testing of the data itself, including deduplication, error-rate checks, and other data quality checks.
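As a rough illustration of these two levels, the sketch below pairs a crawl health check with a simple deduplication pass. The `CrawlStats` fields, the thresholds, and the `url` key are all assumptions made for the example, not part of any particular framework.

```python
from dataclasses import dataclass


@dataclass
class CrawlStats:
    """Hypothetical per-run counters a spider might report."""
    items_scraped: int
    requests_made: int
    requests_failed: int


def check_crawl_health(stats, expected_items, max_error_rate=0.05, min_coverage=0.9):
    """Return a list of alert messages; an empty list means the run looks healthy."""
    alerts = []
    # Treat a run with no requests at all as fully failed.
    error_rate = stats.requests_failed / stats.requests_made if stats.requests_made else 1.0
    if error_rate > max_error_rate:
        alerts.append(f"error rate {error_rate:.1%} exceeds {max_error_rate:.1%}")
    # A sharp drop in item count often means the site layout changed.
    if stats.items_scraped < expected_items * min_coverage:
        alerts.append(f"only {stats.items_scraped} items scraped, expected about {expected_items}")
    return alerts


def deduplicate(records, key_fields):
    """Keep the first record per key; return (unique_records, duplicate_count)."""
    seen = set()
    unique = []
    for record in records:
        key = tuple(record.get(field) for field in key_fields)
        if key in seen:
            continue
        seen.add(key)
        unique.append(record)
    return unique, len(records) - len(unique)
```

In practice the expected item count and the thresholds would likely come from the history of previous runs rather than fixed constants.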

At a smaller scale, monitoring and QA can sometimes be done manually by “eyeballing the data” or auditing it by hand, especially if collection is infrequent and the volumes are small. But what happens when you collect 10 million product prices or 100 million real estate listings? You need specialized tools and resources to evaluate and monitor quality. Like your development team, QA has associated costs, setup costs, delivery time implications, and so on.

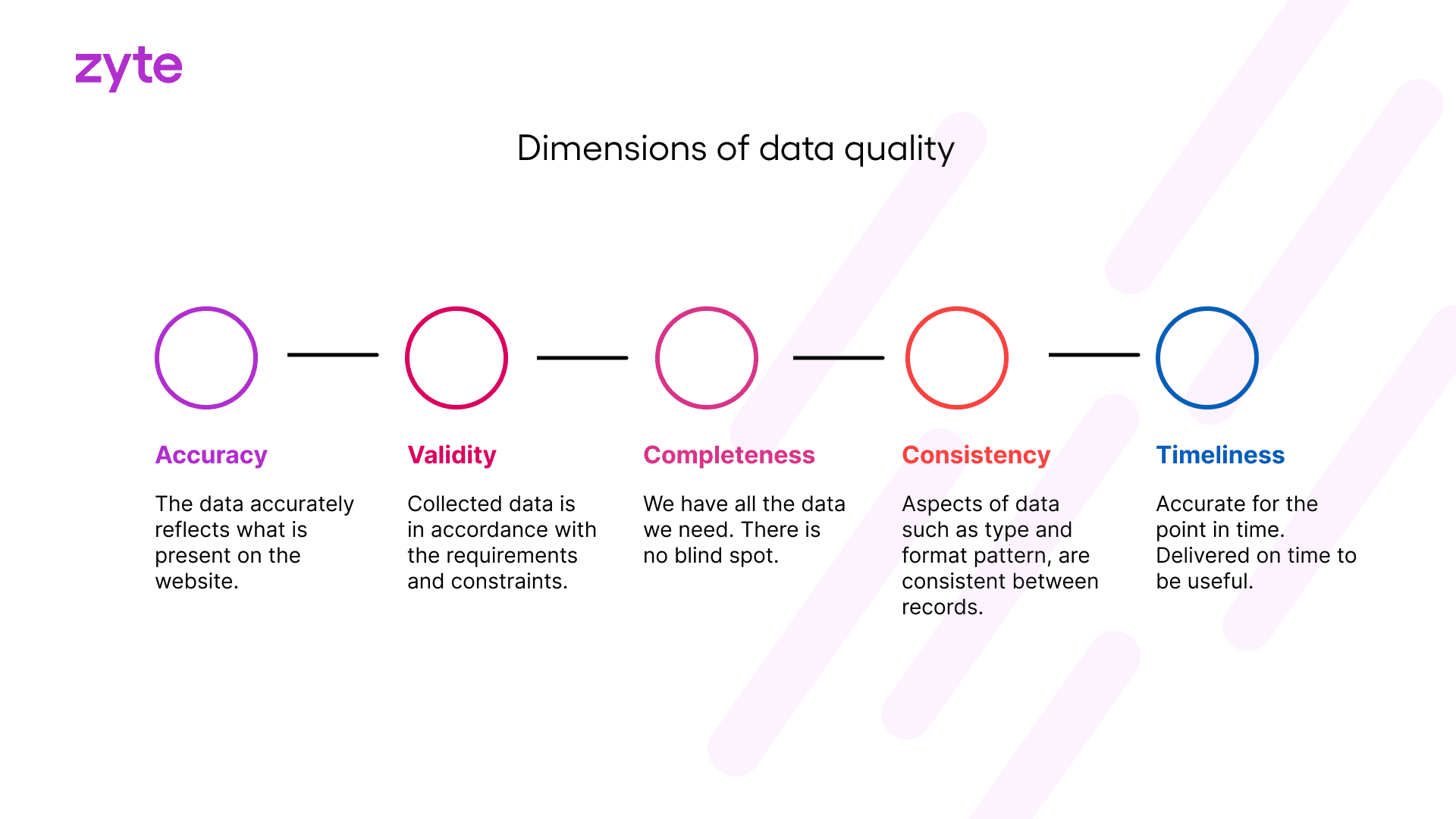

The dimensions of data quality

The data quality dimensions you’ll want to check are

accuracy,

validity,

timeliness,

completeness,

uniqueness, and

consistency.
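A few of these dimensions can be scored automatically per batch. The sketch below is a minimal example that assumes a hypothetical product record with `url`, `name`, `price`, and a timezone-aware `scraped_at` field; it covers completeness, validity, timeliness, and uniqueness. Accuracy and consistency are left out because checking them typically depends on a trusted reference or on rules specific to the dataset.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical schema, chosen only for this example.
REQUIRED_FIELDS = ("url", "name", "price", "scraped_at")


def quality_report(records, now=None, max_age=timedelta(days=1)):
    """Return the share of records (0.0 to 1.0) passing each check."""
    now = now or datetime.now(timezone.utc)
    total = len(records)
    complete = valid = fresh = 0
    seen_urls = set()
    for record in records:
        # Completeness: every required field is present and non-empty.
        if all(record.get(field) not in (None, "") for field in REQUIRED_FIELDS):
            complete += 1
        # Validity: price must be a positive number (bool is excluded on purpose).
        price = record.get("price")
        if isinstance(price, (int, float)) and not isinstance(price, bool) and price > 0:
            valid += 1
        # Timeliness: record was scraped within max_age (assumes aware datetimes).
        scraped_at = record.get("scraped_at")
        if isinstance(scraped_at, datetime) and now - scraped_at <= max_age:
            fresh += 1
        seen_urls.add(record.get("url"))

    def ratio(count):
        return count / total if total else 0.0

    return {
        "completeness": ratio(complete),
        "validity": ratio(valid),
        "timeliness": ratio(fresh),
        # Uniqueness: distinct URLs relative to the number of records.
        "uniqueness": ratio(len(seen_urls)),
    }
```

Tracking these ratios over time, and alerting when one drops, is one way to replace manual spot checks once volumes grow.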

QA has a larger function than just checking for duplicates or errors; it’s about data integrity and ensuring the data is accurate for downstream consumption.

